Table of Contents

Robots.txt file is one of the important aspects of SEO practices.

Robots.txt file is also known as robots exclusion standard protocol.

Robots.txt file gives directives to a google bot where to crawl or not.

Robots .txt is a file easy to understand, however even easier to mess up even if a single character is misplaced.

This small file is a part of every website, but many people are unaware of robots.txt.

If you are a blogger or a website owner, you should have a little bit of knowledge regarding the robots.txt file.

If you properly use this, it makes your lives easier by crawling efficiently and avoiding duplicate content. And I think everyone wants this. Don’t you?

I will tell you all about robots .txt and the legal way to use it properly. And it is not difficult to implement, trust me.

So are you gearing up to know one of the vital SEO hacks?

What is the robots.txt file in SEO?

Robots .txt file is for a search engine web crawler. It tells a google bot (search engine web crawler) which content to crawl or which should not.

Importance of robots.txt file

When it comes to search engines, a website has many pages to crawl, even if you do not think about its content and pages.

But we do not want to crawl all the pages or content of our website, so at this point, the robots.txt SEO practice makes it easier for Google bots to crawl the pages.

The robots.txt file is an SEO practice that gives the right signals to search engines and the Robots.txt file to communicate the crawling preference.

You can lock some of your website parts that you do not want to crawl by a search engine.

Most search engines are obedient, and google is one of them. It follows the instructions set by robots.txt.

If the search engine is about to visit your website, it first checks the robots.txt file for the instructions set by a website holder. As per the given instructions, google bot crawled the website.

How is the Robots.txt file?

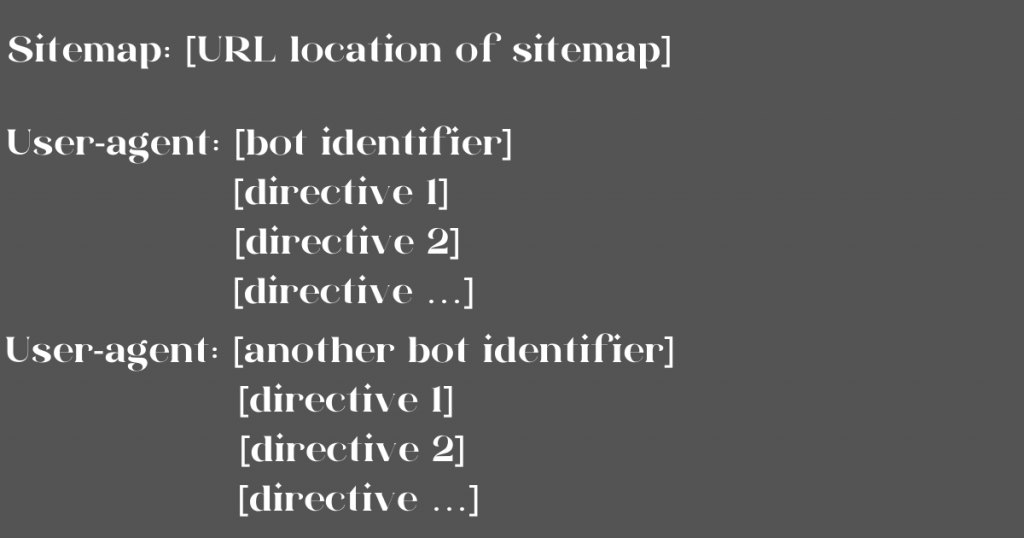

Robots.txt file has a syntax or a structure to write it in the right way.

Ohh! it looks like a terrible file if you have not seen it before. But if you try to read it carefully, it seems very simple.

Here you set the rules for search engines. It is syntax and pretty much good to understand.

Let’s have a look at all the rules in detail.

User-agent :

There are different search engines, and they recognize themselves as other search engines. You can put custom rules for each of them.

There are hundreds of search engines, but I have listed a few.

- Google search engine

- Google image search engine

- Binge

- Yahoo

Let’s look at the other code.

In this code, we see an asterisk used after the user-agent that means all search engines who visit the website and Disallow:/ means are not allowed to crawl the site.

In the second code, we see user-agent: Googlebot Allow: / means the Google bot can crawl the content.

You can set directives for multiple users agents as many as you want. If you set the directions for one user agent, for the second, and so on, then the directives of the first user agent are not applicable for the second one and respectively.

Or, if you want to allow all search engines crawler to crawl your website or content which you want to crawl, then you can put it like this-

User-agent: *

Allow: /

Directives:

Directives are nothing but the rules or instructions set by you to tell web crawlers which content to crawl or which is not to crawl.

There are two supportive directives-

Allow –

Use this directive to crawl a google bot or other web crawlers to access the permitted content or directories or pages or else you set.

Let say if you want to permit a google bot to crawl pages of a website, then you can write it as

User-agent:Googlebot

Allow: /page

Disallow –

Use this directive to restrict the permission to crawl the search engines for a particular link or page or post or for all posts or whatever website content you don’t want to crawl.

Let say if you do not want the search engine bot to crawl your posts; then you can set rules like this-

User-agent:*

Disallow: /post

Here you have restricted all search engines web crawlers to crawl your post.

If your website has many pages to crawl, it takes time for the search engine to crawl all content, and it can negatively impact your ranking.

Google bot has a crawl budget and divides into two parts 1. Crawl rate limit and 2. Crawl demand to know more about Googlebot’s crawl rate limit and crawl demand you can read here.

Let’s see the other directives from robots.txt-

Sitemap-

First of all, what is a sitemap? It has all the pages or content of your site that you want to crawl or index. Generally, it includes at the top of the robots.txt file or the bottom of your robots.txt file.

Use this directive when you want to tell your sitemap’s location to web robots to crawl or index.

For an instance

Sitemap: https://www.domain.com/sitemap.xml

User-agent: *

Allow: /blog

Or

User-agent: *

Allow: /blog/post-title/

Sitemap: https://www.domain.com/sitemap.xml

Generally, the sitemap file has an extension of the .xml

For example www.sumedhak.com/sitemap.xml

You can submit your sitemap through the Google search console.

There are some unsupportive directives that Google does not support

I just listed it for you

- Crawl-delay- You can ask the web crawler to crawl the specified content by mentioned delay time. For example, say crawl delay: 5

It means 5 sec. But Google no longer supports this directive.

- No index- In September 2019, Google made it clear that it does not support this directive.

- Nofollow – This directive tells a web robot who visits your site that they do not follow the links on the mentioned pages, folders, or directories.

What is the need for having a robots.txt file?

Robots.txt file is a small file that sets rules for your website that which part of the website is to crawl and index and which is not.

It makes the robot bot crawl your site more effectively and efficiently.

It helps to prevent crawling duplicate content, Prevent server overload, and keeps sections of the website private.

How to find the robots.txt file?

It is easy to find the robots.txt file if you have already. To find the robots.txt file, type your website address followed by robots.txt. Do Not confuse; it should write like this-

https://sumedhak.com/robots.txt, then enter, you will find the file.

You will find a file something like this.

Note: This is a small part of the robots.txt file.

How to create a robots.txt file?

To create a robots.txt file, you need any plain text editor (e.g. notepad)

The file must be named robots.txt only.

You can have only one robots.txt file for your site.

You should put the file in the root directories of the website host to which the file applies. https://www.sumedhak.com/robots.txt

Robots.txt files cannot be in subdirectories. https://sumedhak.com/pages/robots.txt

This file can apply to subdomains of your domain. For example,

https://sumedhak.com/robots.txt or subdomains https://sumedhak.com:8181/robots.txt

Once you form the robots.txt file on a plain text editor, add rules to your file. Rules are nothing but instructions.

There is the syntax to write this file as we see it above, but here is the document where google explained it very well.

For instance, the basic syntax is

User-agent: *

Disallow:

It means you have mentioned the sitemap location at the top. Then user-agent here you have

put (*) indicates you allow search engines who visit your website. Disallow:

Here nothing after Disallow means you want to crawl your entire website.

It is the basic syntax of writing a robots.txt file. By using other directives, you can instruct the web robot what part of your website you want to crawl or not.

Examples of Robots.txt file –

You can access the robots.txt file by putting the URL https://www.example.com/robots.txt.

You can access this file for any website; if one of the website’s robots.txt files fulfills your requirements, you can copy-paste it into your text editor and save it in a root directory of a website.

Here are some examples of robots.txt file

Access for all web robots for all

User-agent: *

Disallow:

No access for all web robots

User-agent: *

Disallow: /

Block subdirectory for all web robots

User-agent: *

Disallow: /folder/

Block subdirectory for all web robots with allowing one file

User-agent: *

Disallow: /folder/

Allow: /folder/post.html

Block one file for all web engines

User-agent: *

Disallow: /a-file.pdf

These are some examples of robots.txt files, but one can customise the file as per requirements.

Conclusion –

For a new non-technical reader, I know this article may seem horrible to understand at one reading. But if you try to understand the syntax and the directives, it is easier to grab.

But the small file is a significant SEO hack.

A well set up robots.txt file helps to increase your website visits. And show your content in SERPs in the best manner.

Practices of using the Robots.txt file can make remarkable changes. It is my experience.

So what is your experience?